LM101-085: Ch7: How to Guarantee your Batch Learning Algorithm Converges

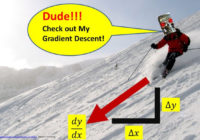

This 85th episode of Learning Machines 101 discusses formal convergence guarantees for a broad class of machine learning algorithms designed to minimize smooth non-convex objective functions using batch learning methods. In particular, the episode covers a broad class of unsupervised, supervised, and reinforcement machine learning algorithms which iteratively update their parameter vector by adding a perturbation based upon all of the training data. This update is repeated until a parameter vector is generated which exhibits improved predictive performance. The magnitude of the perturbation at each learning iteration is called the “stepsize” or “learning rate” and the direction of the perturbation vector is called the “search direction”. Simple mathematical formulas are presented, based upon research from the late 1960s by Philip Wolfe and G. Zoutendijk, that ensure convergence of the generated sequence of parameter vectors. These formulas may be used as the basis for the design of smart algorithms that select the learning rate automatically. The material in this podcast is designed to provide an overview of Chapter 7 of my new book “Statistical Machine Learning” and is based upon material originally presented in Episode 68 of Learning Machines 101!
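To make the idea of a stepsize and a search direction concrete, here is a minimal Python sketch of batch learning with automatic stepsize selection. It is an illustration under stated assumptions, not the method from the book: the function names `wolfe_stepsize` and `batch_descent` are hypothetical, the search direction is assumed to be the negative gradient, the stepsize is found by a simple bisection search for the (weak) Wolfe conditions, and the constants `c1` and `c2` are just commonly used values.

```python
import numpy as np

def wolfe_stepsize(f, grad, w, d, c1=1e-4, c2=0.9, max_tries=50):
    """Search for a stepsize satisfying the weak Wolfe conditions by bisection.

    Hypothetical helper: c1 and c2 are conventional choices, not values
    taken from the episode.
    """
    lo, hi = 0.0, np.inf
    alpha = 1.0
    f0 = f(w)
    slope0 = grad(w) @ d  # negative when d is a descent direction
    for _ in range(max_tries):
        if f(w + alpha * d) > f0 + c1 * alpha * slope0:
            # Objective did not decrease enough: stepsize is too large.
            hi = alpha
            alpha = 0.5 * (lo + hi)
        elif grad(w + alpha * d) @ d < c2 * slope0:
            # Slope along d is still steeply negative: stepsize is too small.
            lo = alpha
            alpha = 2.0 * lo if np.isinf(hi) else 0.5 * (lo + hi)
        else:
            return alpha  # both conditions hold
    return alpha  # fall back to the last candidate

def batch_descent(f, grad, w0, n_iters=500, tol=1e-8):
    """Batch learning: every update uses the gradient over ALL training data."""
    w = np.asarray(w0, dtype=float)
    for _ in range(n_iters):
        g = grad(w)
        if np.linalg.norm(g) < tol:
            break
        d = -g  # search direction (assumption: steepest descent)
        alpha = wolfe_stepsize(f, grad, w, d)  # stepsize / learning rate
        w = w + alpha * d
    return w

# Toy training set (made up for illustration): y = 1 + 2 * x exactly.
X = np.array([[1.0, 0.0], [1.0, 1.0], [1.0, 2.0], [1.0, 3.0]])
y = np.array([1.0, 3.0, 5.0, 7.0])

def objective(w):
    return 0.5 * np.mean((X @ w - y) ** 2)

def objective_grad(w):
    return X.T @ (X @ w - y) / len(y)

w_hat = batch_descent(objective, objective_grad, np.zeros(2))
print(w_hat)
```

The toy objective here is convex, so it only illustrates the mechanics; the point of the convergence results discussed in the episode is that stepsize conditions of this kind remain useful for smooth non-convex objectives as well.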